Crawlability and indexability are often used together, but they are not the same thing. Crawlability asks whether a crawler can reach a URL. Indexability asks whether the page can be included in a search engine’s index after it has been found. A page can be crawlable but not indexable. A page can also be blocked from crawling yet still appear as a URL-only result if other pages link to it. This is why basic technical SEO becomes confusing when site owners treat crawling and indexing as one event.

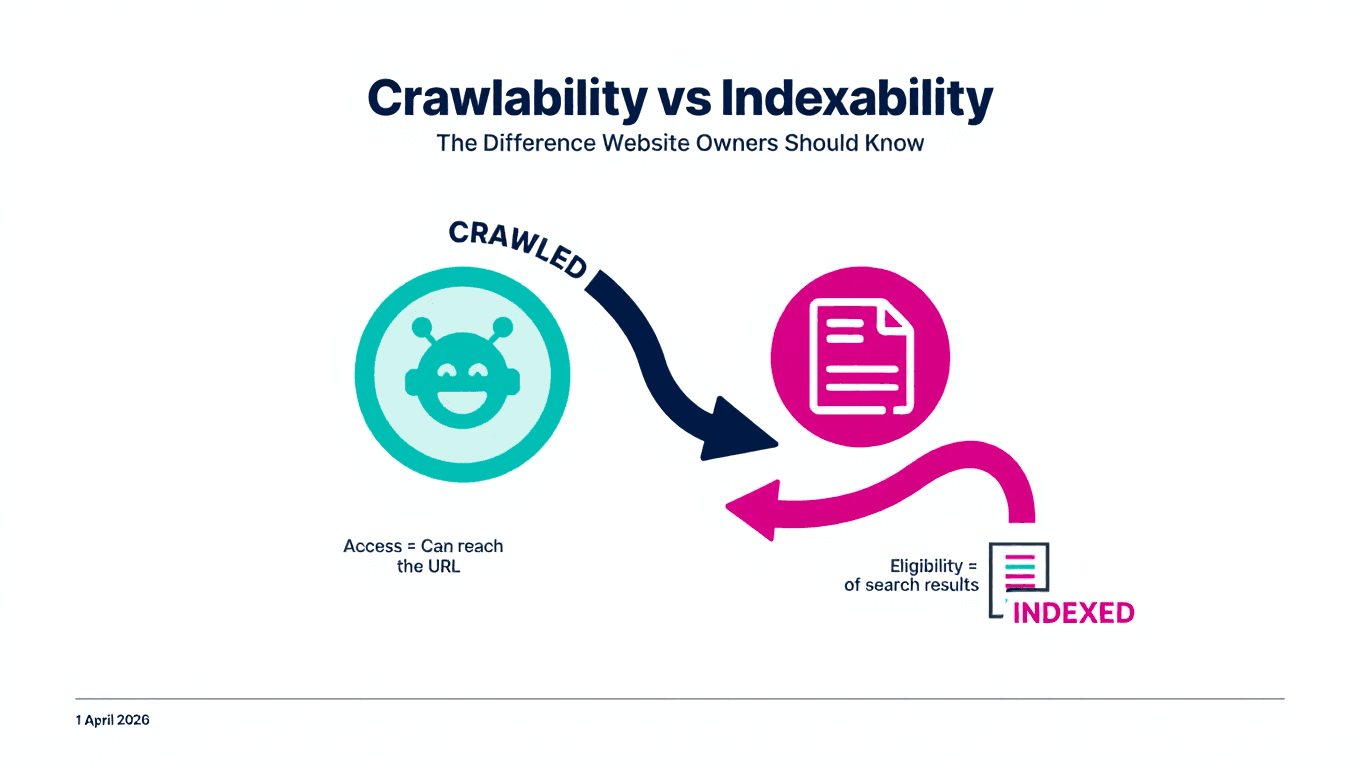

A practical way to think about it is this: crawlability is access, indexability is eligibility. Access comes first. A crawler needs a path to the page, a working URL, and permission to fetch it. Eligibility comes next. The page should return a useful status code, avoid blocking indexing with a noindex directive, point to the right canonical URL, and contain content worth storing. If you are new to the topic, start with what crawlability means before reviewing indexability signals.

Robots.txt is one of the main places where people mix up the two concepts. A robots.txt disallow rule tells compliant crawlers not to fetch a path. It does not work as a reliable removal method for normal web pages. Google’s robots.txt documentation says a page blocked by robots.txt can still be indexed if it is linked from other sites. That does not mean blocking is useless. It means robots.txt controls crawling access, while noindex controls indexing when the crawler is allowed to see the directive.

Noindex works differently. A noindex directive tells search engines that support the rule not to index the page. Google documents the common implementation as a meta robots tag in the page head or as an X-Robots-Tag HTTP header for some file types. The key detail is that the crawler needs to access the page or file response to see the directive. If you block the URL in robots.txt, the crawler may not be able to read the noindex instruction. This is a common source of technical mistakes.

Canonical tags are another indexability signal, but not an absolute command. A canonical URL suggests the preferred version of duplicate or near-duplicate content. Google explains that canonicalization is the process of selecting the representative URL from duplicate pages: Google Search Central on canonicalization. If your canonical tag points to another URL, you are effectively telling search engines that another page should represent this content. That can be correct for duplicate versions, but harmful if used by accident.

For small sites, the safest checklist is short. Is the page linked from other crawlable pages? Does it return 200? Is it missing from robots.txt blocks? Does it avoid noindex? Does it self-canonicalize unless there is a clear duplicate reason? Is it included in the sitemap? Does it contain original, useful content? None of these checks guarantees ranking, but together they reduce technical reasons a page might be ignored.

The main lesson is to separate the two questions. First ask, can a crawler reach this page? Then ask, should a search engine index this page? That difference helps you debug faster, write cleaner technical documentation, and avoid fixes that solve the wrong problem.